IP‑only limits punish everyone behind a shared address; fingerprint‑only limits are easy to game. Dual‑bucket rate limiting, strict per fingerprint and looser per IP, keeps everyday traffic fair while capping total abuse.

During a recent backend interview, I was asked how I would design a rate limiter for a production system. At first, the problem felt straightforward. I explained a simple approach based on identifying users by IP address and limiting requests within a time window. It sounded clean, easy to implement, and likely to work well in most cases.

But as the discussion continued, it became clear that this approach rested on an assumption I had not fully thought through. That assumption holds in controlled environments but breaks down quickly once you consider how real users actually reach systems in production.

Then came a follow-up question:

What happens when multiple users are on the same WiFi network?

That question exposed a flaw in my thinking and led me to rethink the entire design. This post walks through that evolution, from a naive approach to a more production-ready solution.

The Naive Approach

Most implementations start here:

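```java
String key = clientIp;                // the IP address is the only identity
int count = incrementCounter(key);    // requests seen from this key in the current window

if (count > LIMIT) {
    throw new RateLimitException();
}
```

It's simple: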

- No authentication required

- Easy to implement

- Works in isolation

But it assumes:

IP address = user

And that assumption breaks quickly.

What Actually Happens in Real Networks

Inside a WiFi network:

- Devices have private IPs (e.g. 192.168.x.x)

- Requests go through a router, which translates them to its own public address (NAT)

- The backend sees a single public IP

So your system sees:

User A → same IP

User B → same IP

User C → same IP

From the server's perspective, all of these requests appear to come from the same client. The system can no longer distinguish between different users.

The Problem

If your limit is:

3 requests per 90 minutes per IP

Then:

- User A makes 2 requests

- User B makes 1 request

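Now the shared quota of 3 is used up, so the next request from either user is blocked, even though neither has exceeded the limit on their own.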

👉 One user's activity affects another

👉 Legit users get penalized for someone else's behavior

This is where the gap between a working solution and a production-ready solution starts to show.
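The scenario above can be replayed with a minimal sketch of the naive limiter. It assumes all requests fall inside one 90-minute window, so window resets are left out and only the per-IP counting is shown; the class name and the shared IP (from the 203.0.113.0/24 documentation range) are hypothetical.

```java
import java.util.HashMap;
import java.util.Map;

// Naive limiter sketch: the client IP is the only key.
// Assumption: every request lands in the same window, so no reset logic.
class NaiveIpLimiter {
    private final int limit;
    private final Map<String, Integer> counts = new HashMap<>();

    NaiveIpLimiter(int limit) {
        this.limit = limit;
    }

    // Increment first, then compare, mirroring incrementCounter() + "count > LIMIT".
    boolean allow(String clientIp) {
        int count = counts.merge(clientIp, 1, Integer::sum);
        return count <= limit;
    }

    public static void main(String[] args) {
        NaiveIpLimiter limiter = new NaiveIpLimiter(3);
        String sharedIp = "203.0.113.7"; // public IP of the WiFi router

        System.out.println("A#1 " + limiter.allow(sharedIp)); // User A
        System.out.println("A#2 " + limiter.allow(sharedIp)); // User A
        System.out.println("B#1 " + limiter.allow(sharedIp)); // User B
        // The shared quota is exhausted: both users are now rejected.
        System.out.println("A#3 " + limiter.allow(sharedIp));
        System.out.println("B#2 " + limiter.allow(sharedIp));
    }
}
```

The first three calls print true and the last two print false: User B is blocked on their second request purely because of User A's traffic.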

First Improvement: Client Fingerprinting

To improve fairness, I moved from using just IP to a composite identity based on request attributes.

```java
// Composite identity: the IP plus two client-supplied headers
String userAgent = request.getHeader("User-Agent");
String acceptLang = request.getHeader("Accept-Language");

String raw = clientIp + "|" + userAgent + "|" + acceptLang;
String fingerprint = sha256(raw).substring(0, 24); // first 24 hex chars = 96 bits
```

Note: truncating the SHA-256 hex digest to 24 characters (96 bits) is usually still safe at small scale, but it is not required. Using the full hash is simpler to justify if key length is not a concern.

Now:

- Same IP + different browser → different identity

- Same IP + different device → different identity

This improves fairness versus IP-only limiting because many users behind the same network are separated more often. It does not fully eliminate collisions, because users on the same network with similar browser/language profiles can still map to the same fingerprint.
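A self-contained sketch of the fingerprint computation, using the JDK's MessageDigest and HexFormat (Java 17+) in place of the sha256 helper above. The class name Fingerprint and the choice to turn a missing header into an empty string (rather than the literal "null" that plain concatenation would produce) are assumptions for illustration.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.HexFormat;

// Fingerprint sketch: SHA-256 over "ip|userAgent|acceptLang", hex-encoded,
// truncated to 24 hex chars (96 bits). Missing headers become "".
class Fingerprint {
    static String of(String clientIp, String userAgent, String acceptLang) {
        String raw = clientIp + "|" + nullToEmpty(userAgent) + "|" + nullToEmpty(acceptLang);
        try {
            MessageDigest digest = MessageDigest.getInstance("SHA-256");
            byte[] hash = digest.digest(raw.getBytes(StandardCharsets.UTF_8));
            return HexFormat.of().formatHex(hash).substring(0, 24);
        } catch (NoSuchAlgorithmException e) {
            // Every Java SE implementation must support SHA-256, so this should not happen.
            throw new IllegalStateException(e);
        }
    }

    private static String nullToEmpty(String s) {
        return s == null ? "" : s;
    }

    public static void main(String[] args) {
        String ip = "203.0.113.7";
        String chrome = of(ip, "Mozilla/5.0 (Windows NT 10.0) Chrome/120", "en-US");
        String safari = of(ip, "Mozilla/5.0 (iPhone) Safari/604.1", "en-US");
        String chromeAgain = of(ip, "Mozilla/5.0 (Windows NT 10.0) Chrome/120", "en-US");

        System.out.println(chrome.equals(safari));      // false: different browser, separate identity
        System.out.println(chrome.equals(chromeAgain)); // true: identical profile, same identity
    }
}
```

The second comparison illustrates the collision case: two people on the same network with the same browser and language settings share one fingerprint bucket.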

But This Introduces a New Problem

Headers like User-Agent and Accept-Language are fully controlled by the client.

An attacker can rotate them:

User-Agent: bot-1

User-Agent: bot-2

User-Agent: bot-3

👉 Each request becomes a new identity

👉 Rate limiting becomes easy to bypass

So now we have two extremes:

- IP-based limiting is unfair

- Fingerprint-only limiting is easy to bypass

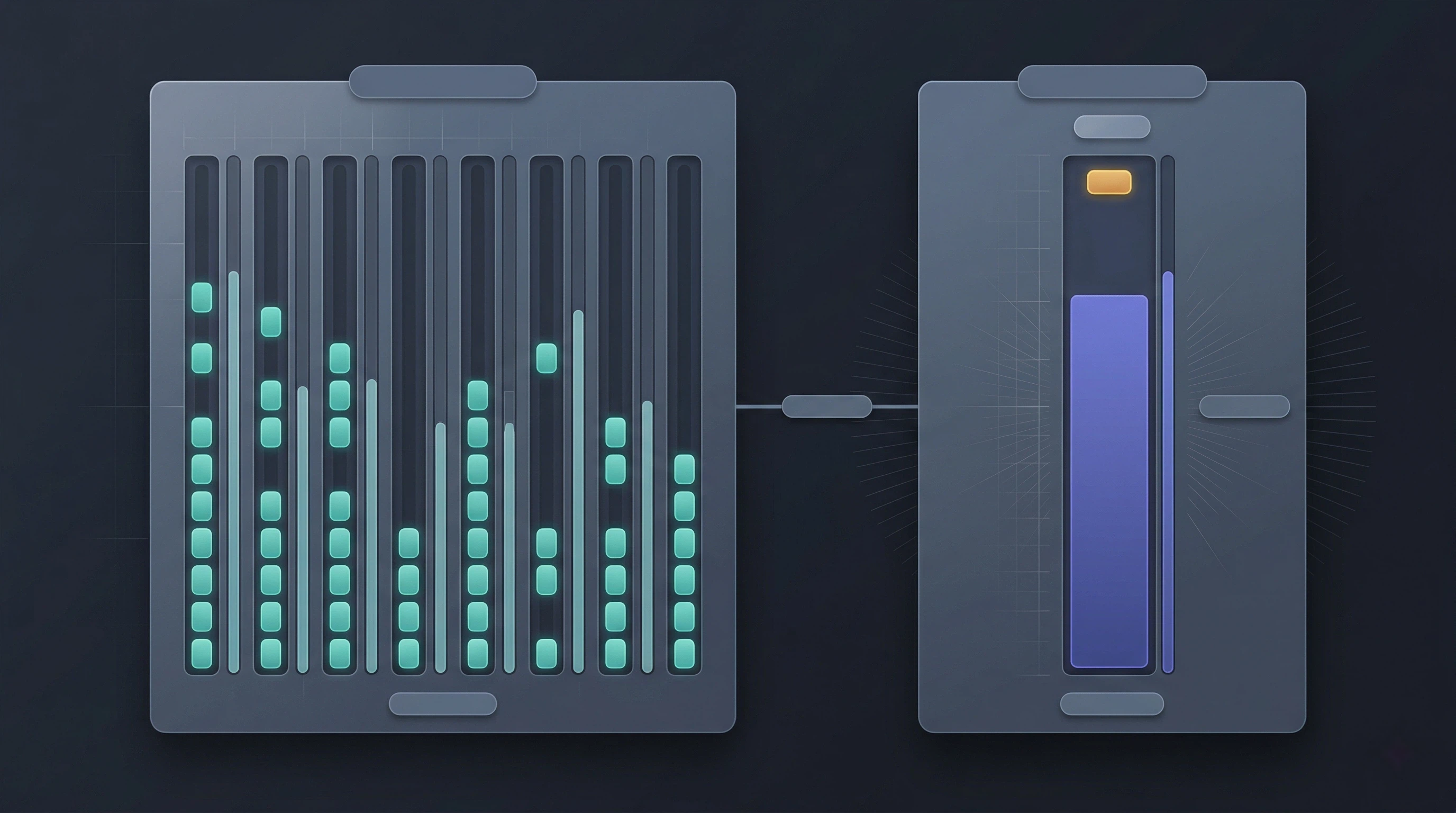

Final Solution: Dual-Bucket Rate Limiting

The solution is to combine both approaches.

For every request, evaluate two independent buckets:

Bucket A → fingerprint-based (fairness)

Bucket B → IP-based (abuse control)

A request is allowed only if both pass:

```java
boolean allowed = fingerprintAllowed && ipAllowed;
```

This creates a balance where legitimate users are treated fairly, while abusive patterns are still controlled.

Implementation (Simplified)

From the final implementation:

```java
RateLimitCheckResult fpResult = checkRateLimit("fp:" + fingerprint + ":" + endpoint, fpConfig);
RateLimitCheckResult ipResult = checkRateLimit("ip:" + clientIp + ":" + endpoint, ipConfig);

// Block if either bucket is exhausted
if (!fpResult.allowed() || !ipResult.allowed()) {
    blockRequest();
}
```

Key idea:

- Fingerprint handles user-level fairness

- IP acts as a safety net for abuse

Why This Works

Scenario 1: Legit users on the same WiFi

- Different fingerprints

- Shared IP

👉 Fingerprint separates users

👉 IP limit remains high enough to avoid false positives

Scenario 2: Header rotation attack

- Many fingerprints

- Same IP

👉 Fingerprint layer is bypassed

👉 IP layer limits total request volume
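Both scenarios can be checked with a minimal in-memory sketch of the dual-bucket rule. It assumes a single process and a single time window (window handling is omitted so only the AND logic is visible), uses the raw "ip|userAgent" string as the fingerprint instead of a hash, and the endpoint name "contact" is hypothetical. The limits, 10 per fingerprint and 30 per IP, match the baseline suggested for a low-traffic portfolio.

```java
import java.util.HashMap;
import java.util.Map;

// Dual-bucket sketch: a request passes only if BOTH the fingerprint bucket
// and the IP bucket are under their limits. No time window, for brevity.
class DualBucketDemo {
    static final int FP_LIMIT = 10; // strict, per fingerprint
    static final int IP_LIMIT = 30; // relaxed, per IP

    private final Map<String, Integer> counts = new HashMap<>();

    private int increment(String key) {
        return counts.merge(key, 1, Integer::sum);
    }

    // Both buckets are charged on every request, including rejected ones.
    boolean allow(String clientIp, String userAgent, String endpoint) {
        int fpCount = increment("fp:" + clientIp + "|" + userAgent + ":" + endpoint);
        int ipCount = increment("ip:" + clientIp + ":" + endpoint);
        return fpCount <= FP_LIMIT && ipCount <= IP_LIMIT;
    }

    public static void main(String[] args) {
        // Scenario 1: three legitimate users behind one WiFi IP, 5 requests each.
        DualBucketDemo wifi = new DualBucketDemo();
        int legitAllowed = 0;
        for (String ua : new String[] {"chrome-laptop", "safari-phone", "firefox-desktop"}) {
            for (int i = 0; i < 5; i++) {
                if (wifi.allow("203.0.113.7", ua, "contact")) legitAllowed++;
            }
        }
        System.out.println("legit allowed: " + legitAllowed + "/15");

        // Scenario 2: one client rotating User-Agent on every request.
        DualBucketDemo attack = new DualBucketDemo();
        int botAllowed = 0;
        for (int i = 1; i <= 40; i++) {
            if (attack.allow("198.51.100.9", "bot-" + i, "contact")) botAllowed++;
        }
        System.out.println("rotating bot allowed: " + botAllowed + "/40");
    }
}
```

Running main prints 15/15 for the legitimate users and 30/40 for the rotating bot: each new User-Agent gets a fresh fingerprint bucket, but every request still lands in the same IP bucket. This sketch charges both buckets even when a request is rejected; whether rejected attempts should consume quota is a design choice the snippet above leaves open.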

Choosing the Right Limits

Important detail:

- Fingerprint limit should be strict

- IP limit should be more relaxed

Example baseline for a low-traffic personal portfolio:

Fingerprint: 10 requests / 5 min

IP: 30 requests / 5 min

For this project scope, these values are usually practical. Note that with these numbers, three clients using their full fingerprint quota are enough to exhaust the shared IP budget. In larger shared networks (university, office, public WiFi), the same IP can represent many legitimate users, so IP limits should be tuned with real traffic data.

Sliding Window for Accuracy

Instead of fixed windows, I used a timestamp-log sliding window:

```java
// Evict timestamps older than the window, then check what remains
window.removeOldRequests(windowStart);

if (currentCount >= limit) {
    return blocked;
}
```

Benefits:

- Smoother request distribution

- No burst abuse at window boundaries

Implementation detail: this model stores recent request timestamps per key (rather than just a counter). That gives better precision, but memory usage grows with active keys and request volume, so cleanup and sensible per-endpoint limits are important.
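A minimal sketch of a timestamp-log sliding window, assuming a single process and timestamps passed in explicitly as epoch milliseconds so the behavior can be tested. The class and method names are hypothetical; blocked requests are not recorded, matching the "check before adding" shape of the snippet above.

```java
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.HashMap;
import java.util.Map;

// Sliding-window-log sketch: keep recent timestamps per key and allow a
// request only if fewer than `limit` fall inside (now - window, now].
// Not thread-safe; single process only.
class SlidingWindowLog {
    private final int limit;
    private final long windowMillis;
    private final Map<String, Deque<Long>> logs = new HashMap<>();

    SlidingWindowLog(int limit, long windowMillis) {
        this.limit = limit;
        this.windowMillis = windowMillis;
    }

    boolean tryAcquire(String key, long nowMillis) {
        Deque<Long> log = logs.computeIfAbsent(key, k -> new ArrayDeque<>());
        long windowStart = nowMillis - windowMillis;
        // Oldest timestamps sit at the head; drop those that slid out of the window.
        while (!log.isEmpty() && log.peekFirst() <= windowStart) {
            log.pollFirst();
        }
        if (log.size() >= limit) {
            return false; // blocked requests are not recorded
        }
        log.addLast(nowMillis);
        return true;
    }

    public static void main(String[] args) {
        long fiveMin = 5 * 60_000L;
        SlidingWindowLog limiter = new SlidingWindowLog(10, fiveMin);

        // 10 requests just before a fixed-window boundary at t = 5 min...
        int early = 0;
        for (int i = 0; i < 10; i++) if (limiter.tryAcquire("fp:abc", fiveMin - 1)) early++;
        // ...and 10 more just after it. A fixed window resetting at 5 min would allow all 20.
        int late = 0;
        for (int i = 0; i < 10; i++) if (limiter.tryAcquire("fp:abc", fiveMin + 1)) late++;

        System.out.println(early + " then " + late); // 10 then 0
    }
}
```

The boundary burst is rejected because the first ten timestamps are still inside the trailing 5-minute window. A production version would also evict empty deques for idle keys, for the memory reasons above, and would need synchronization for concurrent requests.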

Additional Production Considerations

1. Endpoint-Based Isolation

```java
String key = bucketType + ":" + identifier + ":" + endpointType;
```

Each feature has its own rate limit, so activity in one area does not affect another.

2. Safe IP Extraction

X-Forwarded-For

X-Real-IP

Only trust these headers when traffic can only reach your app through a known proxy/load balancer that sanitizes forwarding headers. Do not blindly trust the left-most value, since the client can set it to anything. In multi-proxy setups, evaluate the chain against your trusted proxy list and derive the client as the first non-trusted hop from the right (or use your platform's vetted real-IP mechanism). If that trust model is not guaranteed, fall back to the direct remote address.
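A sketch of the right-to-left walk over X-Forwarded-For. Assumptions: trusted proxies are given as exact IP strings (real deployments often need CIDR ranges), hops are not validated as well-formed IP addresses, X-Real-IP is not handled, and the class name ClientIpResolver is hypothetical.

```java
import java.util.Set;

// Resolve the client IP: only consult X-Forwarded-For when the direct peer
// is a trusted proxy, then return the first untrusted hop from the right.
class ClientIpResolver {
    private final Set<String> trustedProxies;

    ClientIpResolver(Set<String> trustedProxies) {
        this.trustedProxies = trustedProxies;
    }

    String resolve(String remoteAddr, String xForwardedFor) {
        // Request did not come through our proxy, or no header: use the socket address.
        if (!trustedProxies.contains(remoteAddr) || xForwardedFor == null || xForwardedFor.isBlank()) {
            return remoteAddr;
        }
        String[] hops = xForwardedFor.split(",");
        for (int i = hops.length - 1; i >= 0; i--) {
            String hop = hops[i].trim();
            if (hop.isEmpty()) {
                continue; // skip malformed empty entries
            }
            if (!trustedProxies.contains(hop)) {
                return hop; // first non-trusted hop from the right
            }
        }
        // Every hop is a trusted proxy: the request originated inside our infrastructure.
        return remoteAddr;
    }

    public static void main(String[] args) {
        ClientIpResolver resolver = new ClientIpResolver(Set.of("10.0.0.5", "10.0.0.6"));
        // The client spoofed "1.2.3.4"; proxy 10.0.0.5 appended the real client IP,
        // and proxy 10.0.0.6 appended 10.0.0.5 before forwarding to the app.
        String xff = "1.2.3.4, 198.51.100.9, 10.0.0.5";
        System.out.println(resolver.resolve("10.0.0.6", xff));          // 198.51.100.9
        // A request that bypassed the proxies: the header is ignored.
        System.out.println(resolver.resolve("198.51.100.9", "1.2.3.4")); // 198.51.100.9
    }
}
```

The spoofed left-most value never wins, because the walk stops at the first address that none of the trusted proxies vouched for.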

3. Response Headers

X-RateLimit-Limit

X-RateLimit-Remaining

X-RateLimit-Reset

These help clients understand their quota and behave more predictably.
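The X-RateLimit-* headers are a widely used convention rather than a formal standard, so their exact semantics vary between APIs. A minimal sketch of building them, assuming Reset is sent as Unix epoch seconds (some APIs send "seconds until reset" instead); the class and method names are hypothetical.

```java
import java.time.Instant;
import java.util.LinkedHashMap;
import java.util.Map;

// Build rate-limit response headers from a bucket's state.
// Assumption: X-RateLimit-Reset is expressed as Unix epoch seconds.
class RateLimitHeaders {
    static Map<String, String> build(int limit, int used, Instant resetAt) {
        Map<String, String> headers = new LinkedHashMap<>(); // keeps insertion order
        headers.put("X-RateLimit-Limit", Integer.toString(limit));
        // Never report a negative remaining count, even if the client overshot.
        headers.put("X-RateLimit-Remaining", Integer.toString(Math.max(0, limit - used)));
        headers.put("X-RateLimit-Reset", Long.toString(resetAt.getEpochSecond()));
        return headers;
    }

    public static void main(String[] args) {
        Map<String, String> h = build(10, 7, Instant.ofEpochSecond(1_700_000_300L));
        h.forEach((name, value) -> System.out.println(name + ": " + value));
        // X-RateLimit-Limit: 10
        // X-RateLimit-Remaining: 3
        // X-RateLimit-Reset: 1700000300
    }
}
```

With two buckets per request, one reasonable choice is to report whichever bucket has fewer requests remaining, since that is the one that will block first.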

What This Still Doesn't Solve

Even this approach has limitations:

- Fingerprints are not true identity

- Headers can still be manipulated

- Distributed attacks can bypass dual-bucket protections by spreading traffic across many IPs and fingerprints

More advanced systems use:

- User-based limits

- API keys

- Richer device fingerprinting (signals beyond request headers)

- Behavioral analysis

- CDN/WAF bot mitigation and challenge flows at the edge

Key Takeaway

The real problem was never rate limiting.

It was identity.

Who is making the request?

- IP alone is inaccurate

- Fingerprint alone is weak

- Combining both gives a practical baseline that should be tuned and hardened for context

Closing Thought

Rate limiting looks simple until you deal with real-world traffic. Small assumptions, like equating IP with user, can quietly turn into production issues.

Designing a good rate limiter is less about counting requests and more about balancing fairness with abuse resistance.

Code Reference

If you're interested in the implementation details, you can check out the full code here: GitHub repository.

This was a small shift in thinking, but it significantly improved how I approach backend system design.